AI 2027

Yesterday, a new PDF dropped. The report was authored by five Manifold users (some of whom are better known for other things): Daniel Kokotajlo, Scott Alexander, Thomas Larsen, Eli Lifland, and Romeo Dean.

This report, called “AI 2027,” was released primarily as an interactive website by the AI Futures Project, and contains a hybrid forecast and fictionalized scenario for the next few years of AI developments.

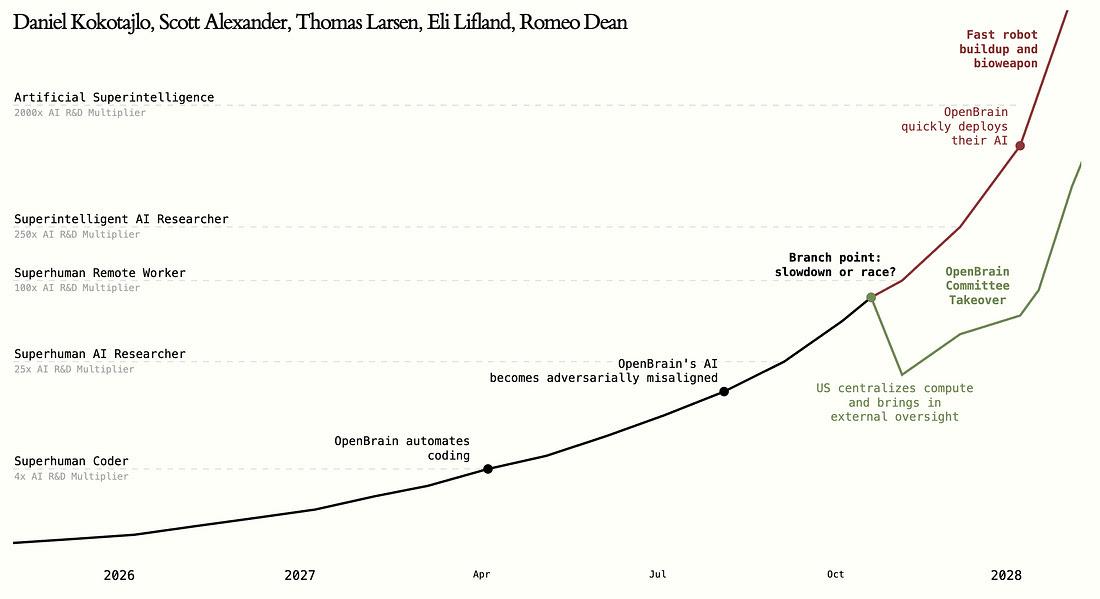

Graphical summary of AI-2027’s forecast. Lines going up.

This report got press coverage from the NYT and led to what was (perhaps) Scott Alexander’s first podcast appearance. While the report is best interpreted as a single potential scenario (one that represents the authors’ “best guess about what [the near-term impact of AI] might look like”), Manifold users are skeptical that these forecasts will be borne out as described.

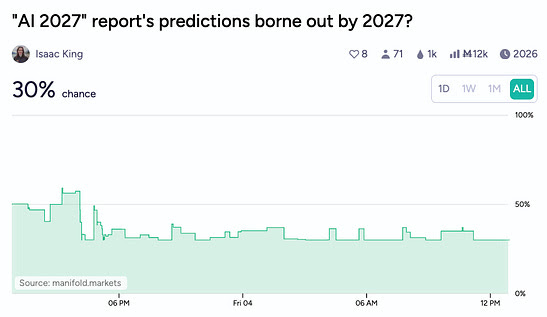

The above market resolves based on consensus from Manifold moderators at the end of 2026 as to whether the AI-2027 report’s forecast has been more or less correct to that point:

Resolution will be via a poll of Manifold moderators. If they’re split on the issue, with anywhere from 30% to 70% YES votes, it’ll resolve to the proportion of YES votes. Otherwise it resolves YES/NO.
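For anyone who wants to see how that rule plays out with actual vote counts, here is a minimal sketch in Python. The function name `resolve_market` is hypothetical, and two readings are assumptions rather than anything the market description spells out: that the 30% and 70% boundaries are inclusive, and that "otherwise" means NO below 30% and YES above 70%.

```python
from fractions import Fraction


def resolve_market(yes_votes: int, total_votes: int) -> float:
    """Return the resolution value in [0, 1] for a moderator poll.

    Assumptions (not stated in the market description):
    - the 30% and 70% boundaries count as "split" (inclusive);
    - below 30% YES resolves NO (0.0), above 70% YES resolves YES (1.0).
    """
    if total_votes <= 0:
        raise ValueError("the poll needs at least one vote")
    if not 0 <= yes_votes <= total_votes:
        raise ValueError("yes_votes must be between 0 and total_votes")

    # Exact rational arithmetic avoids float rounding at the boundaries.
    share = Fraction(yes_votes, total_votes)

    if share < Fraction(3, 10):
        return 0.0  # clear NO
    if share > Fraction(7, 10):
        return 1.0  # clear YES
    return float(share)  # split poll: resolve to the YES proportion
```

Under these assumptions, a 4-of-10 YES poll would resolve to 40%, while a 9-of-10 YES poll would resolve fully YES.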

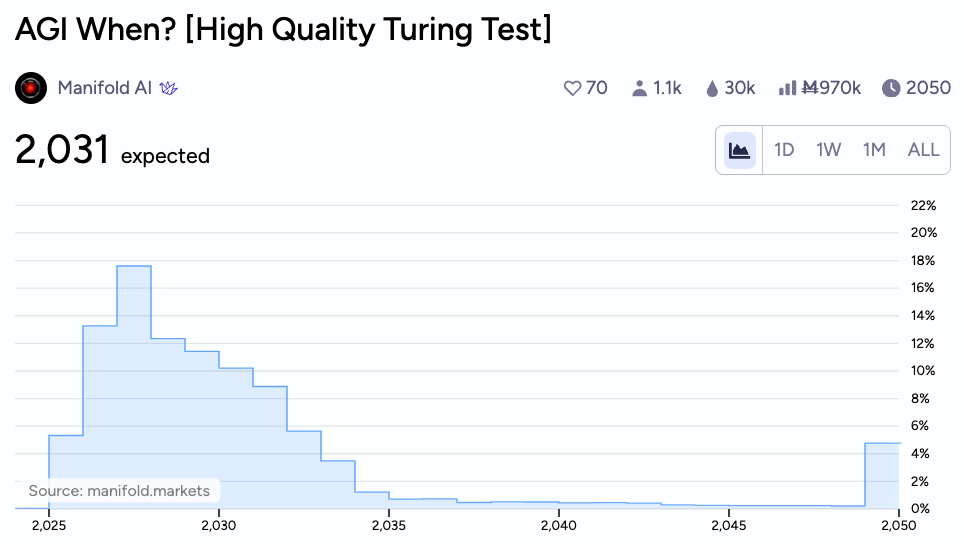

Part of this skepticism is natural, given that AI-2027 lays out a timeline for something resembling AGI that is much faster than Manifold’s own market estimate. The report forecasts AGI (or at least several intersecting technological developments that equivalent Manifold markets would almost certainly characterize as such) by the end of 2027. Manifold does agree that 2027 is the modal year for AGI, but (1) most of the probability mass lies beyond that, with a median date of around 2029, and (2) the criterion for this market is a high-quality Turing test, a lower bar than the ones AI-2027 thinks AI will clear.

Hopefully Manifold users will, as usual, do a great job of operationalizing the falsifiable predictions from the AI-2027 report into well-defined individual markets.